Bayesian Networks (Jupyter NB)

Contents

Bayesian Networks (Jupyter NB)¶

Note

This page is based on the Bayesian networks ProbLog tutorial, which is executed from within Python using the ProbLog library.

Content format

This page is written in the Jupyter Notebook format.

The ProbLog content is executed directly from Python.

Alternatively, see the Markdown Notebook version of the

Bayesian Networks tutorial for an example of executing ProbLog code

with ipywidgets; the Percent Notebook version to see

how ProbLog code can be executed with a bespoke

iPython cell magic command; or the

Markdown Notebook version based on the Sphinx-ProbLog

extension, which uses native, interactive ProbLog code boxes.

You can execute the code blocks enclosed below by launching this page as a Jupyter Notebook with MyBinder – this option is available from the Launch Menu that appears after hovering the mouse cursor over the icon shown in the top bar. You can also enable the code cells to work directly on this page with Thebe by activating Live Code from the Launch Menu.

In addition to this book page and the corresponding Jupyter Notebook,

static and interactive reveal.js slides are built from the page source.

The static slides can be accessed with the Static Slides (Jupyter NB) link

listed in the left panel (the table of content) or with this button

;

then open RISE by clicking the button located in the top bar

of the Jupyter Notebook interface.

Tip

This page includes a number of Python cells holding code needed to set up ProbLog ipywidgets. You can reveal their content by clicking the buttons, which appear towards the right edge of this page.

import matplotlib.pyplot as plt

from problog.program import PrologString

from problog import get_evaluatable

def plot_outcome(pl_dict):

x = list(pl_dict.keys())

y = [pl_dict[key] for key in x]

x = [str(key) for key in x]

plt.barh(x, y, height=.5)

plt.xlim([0, 1.15])

plt.ylim([-.5, len(x) - .5])

for i, v in enumerate(y):

plt.text(v + .02, i + .0, '{:.2f}'.format(v), fontweight='bold')

def evaluate_problog(programme):

p = PrologString(programme)

d = get_evaluatable().create_from(p).evaluate()

plot_outcome(d)

We illustrate the use of Bayesian networks in ProbLog using the famous Earthquake example.

Suppose there is a burglary in our house with probability 0.7 and an earthquake with probability 0.2. Whether our alarm will ring depends on both burglary and earthquake:

if there is a burglary and an earthquake, the alarm rings with probability 0.9;

if there is only a burglary, it rings with probability 0.8;

if there is only an earthquake, it rings with probability 0.1;

if there is neither a burglary nor an earthquake, the alarm doesn’t ring.

To model this as a Bayesian network, one would use three random variables, burglary, earthquake and alarm, with burglary and earthquake being parents of alarm. To model this in ProbLog, there are two possible solutions: using ‘plain’ ProbLog or using some syntactic sugar called probabilistic clauses and annotated disjunctions. We now explain both solutions.

digraph alarm1 { burglary -> alarm; earthquake -> alarm; }

ProbLog syntax documentation

Probabilistic facts¶

In ‘plain’ ProbLog, we can encode the Bayesian network as follows.

Since burglary and earthquake are random variable without parents, we can simply encode them as probabilistic facts, with the proper probability.

To express the dependence of the random variable alarm on its parents burglary and earthquake, we use one Prolog rule for every possible state of the parents.

The first rule covers the case in which burglary and earthquake are both true. The required rule is

alarm :- burglary, earthquake, p_alarm1, withp_alarm1an auxiliary atom defined by means of the probabilistic fact0.9::p_alarm1. The point of adding this atom is to ensure that the probability of alarm in this case will be 0.9 as required.The second rule covers the case that burglary is true but earthquake is false. Note that earthquake being false is encoded using the “+” symbol for negation (as in regular Prolog).

The third rule covers the case that burglary is false and earthquake is true.

The fourth case (burglary and earthquake are both false) does not require a rule. This is because, according to our Bayesian network, the probability of alarm is 0 in this case.

We obtain the following ProbLog program.

probabilistic_facts = (

"""0.7::burglary.

0.2::earthquake.

0.9::p_alarm1.

0.8::p_alarm2.

0.1::p_alarm3.

alarm :- burglary, earthquake, p_alarm1.

alarm :- burglary, \+earthquake, p_alarm2.

alarm :- \+burglary, earthquake, p_alarm3.

evidence(alarm,true).

query(burglary).

query(earthquake)."""

)

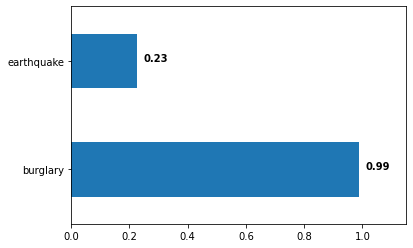

evaluate_problog(probabilistic_facts)

When pressing ‘Evaluate’, ProbLog2 calculates the probability of there being a burglary or an earthquake, given the evidence that the alarm rang. The resulting marginals are: \(P(burglary)=0.9896\) and \(P(earthquake)=0.2275\).

Probabilistic clauses¶

While the above is a correct encoding of the given Bayesian network, it is perhaps not very intuitive due to the auxiliary atoms. Fortunately, ProbLog2 offers some syntactic sugar called probabilistic clauses to encode this in a more readable way. Above, we encoded the information that the conditional probability of an alarm given a burglary and an earthquake equals 0.9 using the rule alarm :- burglary, earthquake, p_alarm1, plus the probabilistic fact 0.9::p_alarm1. We can replace both with a single probabilistic clause of the form 0.9::alarm :- burglary, earthquake. This should be read as: if burglary and earthquake are true, this causes alarm to become true with probability 0.9 if there is a burglary and an earthquake. As this example illustrates, a probabilistic clause has a body, just like regular ProbLog rules, and a head. The difference is that now, the head is annotated with a probability. By also using probabilistic clauses for the other rules in the ProbLog encoding of the Bayesian network, we get the following program.

probabilistic_clauses = (

"""0.7::burglary.

0.2::earthquake.

0.9::alarm :- burglary, earthquake.

0.8::alarm :- burglary, \+earthquake.

0.1::alarm :- \+burglary, earthquake.

evidence(alarm,true).

query(burglary).

query(earthquake)."""

)

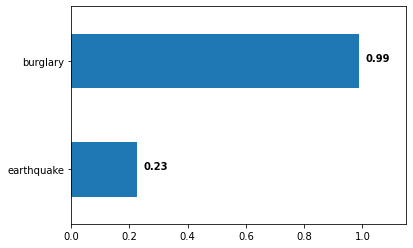

evaluate_problog(probabilistic_clauses)

As you can verify by pressing ‘Evaluate’, this returns the same marginals as the ‘plain’ ProbLog encoding: \(P(burglary)=0.9896\) and \(P(earthquake)=0.2275\). This is not a coincidence: the two programs are equivalent (but the program with probabilistic clauses has the advantage of not needing any auxiliary atoms).

First-order¶

To illustrate the use of first-order ProbLog programs, we show below a first-order extension of the Alarm example.

digraph alarm2 { burglary -> alarm; earthquake -> alarm; alarm -> “calls(john)”; alarm -> “calls(…)”; alarm -> “calls(mary)”; }

Suppose there are \(N\) people and each person independently calls the police with a certain probability, depending on the alarm ringing or not: if the alarm rings, the probability of calling is 0.8, otherwise it is 0.1. This can be modelled as follows. We again use probabilistic clauses and show the case \(N=2\) (two people).

first_order = (

"""person(john).

person(mary).

0.7::burglary.

0.2::earthquake.

0.9::alarm :- burglary, earthquake.

0.8::alarm :- burglary, \+earthquake.

0.1::alarm :- \+burglary, earthquake.

0.8::calls(X) :- alarm, person(X).

0.1::calls(X) :- \+alarm, person(X).

evidence(calls(john),true).

evidence(calls(mary),true).

query(burglary).

query(earthquake)."""

)

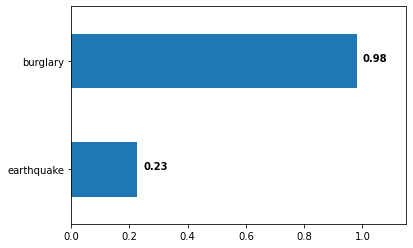

evaluate_problog(first_order)

When pressing ‘Evaluate’, ProbLog2 calculates the probability of there being a burglary or an earthquake, given the evidence that both john and mary called. We obtain \(P(burglary)=0.981939\) and \(P(earthquake)=0.226851\).

In general, any Boolean Bayesian network can be modeled in ProbLog using the above methodology. This can also be extended to non-Boolean Bayesian networks (in which some variables can take more than two possible values), by using annotated disjunctions with multiple atoms in the head.

Annotated disjunctions: Dealing with multi-valued variables¶

Since the random variables in the Bayesian network are all Boolean, we only need a single literal in the head of the rules. We can extend the Bayesian network to have a multi-valued variable by indicating the severity of the earthquake. The literal earthquake now has three possible values none, mild, heavy instead of previously two (no or yes). The probabilities of each value is denoted by the annotated disjunction in 0.01::earthquake(heavy); 0.19::earthquake(mild); 0.8::earthquake(none). An annotated disjunction is similar to a probabilistic disjunction, but with a different head. Instead of it being one atom annotated with a probability, it is now a disjunction of atoms each annotated with a probability.

annotated_disjunctions = (

"""person(john).

person(mary).

0.7::burglary.

0.01::earthquake(heavy); 0.19::earthquake(mild); 0.8::earthquake(none).

0.90::alarm :- burglary, earthquake(heavy).

0.85::alarm :- burglary, earthquake(mild).

0.80::alarm :- burglary, earthquake(none).

0.10::alarm :- \+burglary, earthquake(mild).

0.30::alarm :- \+burglary, earthquake(heavy).

0.8::calls(X) :- alarm, person(X).

0.1::calls(X) :- \+alarm, person(X).

evidence(calls(john),true).

evidence(calls(mary),true).

query(burglary).

query(earthquake(_))."""

)

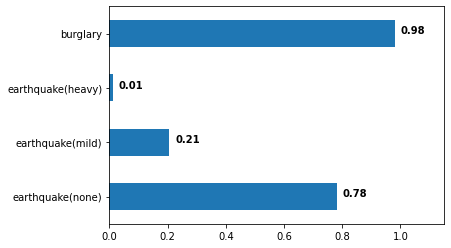

evaluate_problog(annotated_disjunctions)